How We Built Automated Competitor Monitoring for a B2B SaaS Company

Safi Ur Rehman

Founder, Vynapse

One of our clients runs a B2B SaaS in a niche industry. Their problem was simple but painful: they kept finding out about competitor changes weeks late. A competitor drops pricing, and their sales team only learns about it when a prospect mentions it on a call. A competitor launches a new feature, same thing.

This is not a unique problem. Most B2B companies track competitors manually. Someone checks a few websites once a month, maybe scans LinkedIn, maybe subscribes to a newsletter. It is inconsistent, incomplete, and always reactive. By the time you know what happened, your competitor has already moved the conversation.

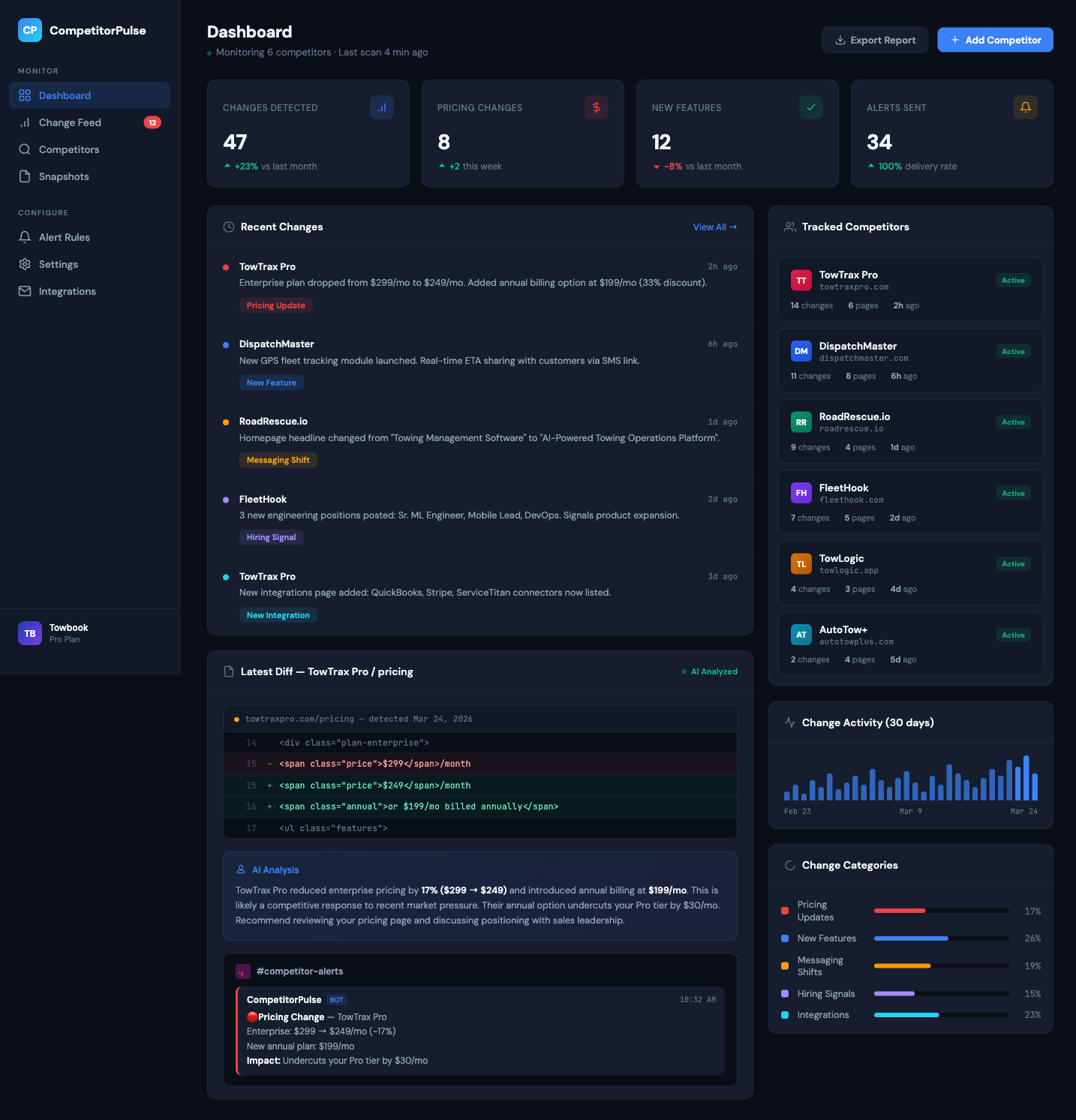

So we built them an automated competitor monitoring pipeline. It has been running for about two months now, and their head of sales told me it changed how they prep for calls. They walk in knowing exactly what the competitor announced last week instead of getting blindsided.

Here is the full breakdown of what we built, how it works, and the parts that took the most iteration.

The Problem in Detail

Before we started building, we spent time understanding exactly how the team was tracking competitors. The answer was: barely.

Their process looked like this:

- The marketing lead would manually check three or four competitor websites every couple of weeks. Sometimes weekly. Sometimes not for a month.

- The sales team relied on prospects and customers to tell them about competitor moves. That is not competitive intelligence. That is hoping someone else does your research for you.

- Product would occasionally Google competitor names and scan for announcements, but there was no system and no consistency.

- When someone did find something, it was shared in a Slack message that got buried within hours.

The result was predictable. They were consistently two to four weeks behind on competitor pricing changes, feature launches, messaging pivots, and even hiring patterns that signaled where a competitor was investing next. In a niche B2B market where deals cycle over months and relationships matter, walking into a call without knowing what the other side already knows is a real disadvantage.

What We Built

The system does four things in sequence: scrape, diff, classify, and alert.

1. Scraping

We built scrapers that hit competitor websites on a schedule. Not just the homepage. We target the pages where meaningful changes happen:

- Pricing pages. The most obvious one. Any change to pricing tiers, feature gating, or published pricing is an immediate signal.

- Feature and product pages. New features, updated descriptions, changed positioning.

- Changelogs and release notes. If a competitor publishes updates publicly, we capture every entry.

- Job postings. This one is underrated. If a competitor suddenly posts five machine learning engineering roles, that tells you something about their product roadmap. If they are hiring enterprise sales reps in a new region, that tells you about their go-to-market strategy.

- Blog and news sections. Partnership announcements, case studies, thought leadership that reveals strategic direction.

We used Playwright for the scraping layer. This was a deliberate choice. Many competitor pages are JavaScript-heavy single-page applications. Basic HTTP requests or simple HTML parsers miss much of the content because it loads dynamically. Playwright runs a real browser, waits for content to render, and captures what a human would actually see on the page. It is heavier than a simple HTTP call, but it is significantly more reliable for modern web applications.

Each scrape produces a snapshot, a full text capture of the page content at that point in time. We store every snapshot in Supabase.
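
To make the snapshot step concrete, here is a minimal sketch, assuming Playwright's Python sync API. The function names, the record fields, and the choice to wait for `networkidle` are illustrative assumptions, not the production code, and the write to Supabase is left out.

```python
import hashlib
from datetime import datetime, timezone


def capture_page_text(url: str) -> str:
    """Render the page in headless Chromium and return its visible text."""
    # Imported lazily so the rest of this sketch runs without Playwright installed.
    from playwright.sync_api import sync_playwright

    with sync_playwright() as p:
        browser = p.chromium.launch()
        try:
            page = browser.new_page()
            # Wait for network activity to settle so client-rendered content is present.
            page.goto(url, wait_until="networkidle")
            return page.inner_text("body")
        finally:
            browser.close()


def make_snapshot(url: str, text: str) -> dict:
    """Build a snapshot record (hypothetical shape) ready to be stored."""
    # Trim each line and drop blank ones so whitespace churn does not alter the snapshot.
    normalized = "\n".join(line.strip() for line in text.splitlines() if line.strip())
    return {
        "url": url,
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "content": normalized,
        # A content hash makes "nothing changed" a cheap equality check.
        "content_hash": hashlib.sha256(normalized.encode("utf-8")).hexdigest(),
    }
```

In this sketch a scheduled job would call `make_snapshot(url, capture_page_text(url))` for each tracked page and skip the diff entirely when the hash matches the previous snapshot.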

2. Diffing

Every time a new snapshot comes in, it gets compared against the previous snapshot for that page. The system identifies what changed: added text, removed text, modified sections.

This sounds straightforward, and at a technical level it is. But the raw diff output is where the first major challenge hit. A raw diff of a web page is noisy. Copyright year updates, minor CSS class changes that affect rendered text, footer modifications, cookie banner tweaks, rotating testimonials. All of these show up as "changes" in a diff, and none of them are strategically meaningful.

We spent a significant amount of time tuning the diff logic to filter out cosmetic noise. The system ignores changes below a certain character threshold, strips out known dynamic elements (timestamps, session-specific content, rotating banners), and applies structural comparison rather than pure text comparison so that reformatting without content changes does not trigger false positives.
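
A rough sketch of that filtering, using Python's standard `difflib`. The noise patterns, the threshold value, and the function names are assumptions for illustration; the real rules were tuned per page over several weeks.

```python
import difflib
import re
from typing import List, Optional

# Hypothetical patterns for content that changes without carrying meaning.
NOISE_PATTERNS = [
    re.compile(r"©\s*\d{4}"),                    # copyright year
    re.compile(r"\b\d{1,2}:\d{2}(:\d{2})?\b"),   # clock timestamps
]
MIN_CHANGED_CHARS = 20  # illustrative threshold, tune per page


def clean(text: str) -> List[str]:
    """Strip noise patterns, collapse whitespace, and drop empty lines."""
    lines = []
    for line in text.splitlines():
        for pattern in NOISE_PATTERNS:
            line = pattern.sub("", line)
        line = " ".join(line.split())
        if line:
            lines.append(line)
    return lines


def meaningful_diff(old: str, new: str) -> Optional[dict]:
    """Return added and removed lines, or None if the change is below the threshold."""
    old_lines, new_lines = clean(old), clean(new)
    added, removed = [], []
    matcher = difflib.SequenceMatcher(a=old_lines, b=new_lines, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag in ("replace", "delete"):
            removed.extend(old_lines[i1:i2])
        if tag in ("replace", "insert"):
            added.extend(new_lines[j1:j2])
    # Size is measured over whole changed lines, not individual characters.
    changed_chars = sum(len(s) for s in added) + sum(len(s) for s in removed)
    if changed_chars < MIN_CHANGED_CHARS:
        return None
    return {"added": added, "removed": removed}
```

Measuring whole changed lines is a design choice in this sketch: a price edit is only a few characters long, so a threshold applied to the raw character delta could silently drop exactly the change the team cares about most.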

3. AI Classification

This is where the system goes from "here is what changed on a webpage" to "here is what it means for your business." Raw diffs are useless for non-technical people. A sales rep does not want to see that line 47 of a pricing page changed from "$99/mo" to "$79/mo." They want to know: "Competitor X dropped their mid-tier price by 20%, which undercuts our starter plan."

We built a classification layer using the Claude API that does two things:

- Categorizes every change. The system classifies each meaningful diff into one of several categories: pricing update, new feature, feature removal, messaging shift, new integration, new partnership, hiring signal, or other. This categorization lets the team quickly filter for the changes that are most relevant to their role.

- Generates a strategic summary. For each change, the AI writes a short, plain-language summary of what changed and why it might matter. Not a technical diff. A business-relevant interpretation. "Competitor Y added SOC 2 compliance to their enterprise tier feature list. This suggests they are targeting regulated industries and may compete for the healthcare vertical you entered last quarter."

The classification prompt took several rounds of iteration to get right. Early versions were either too generic ("a pricing change was detected") or too speculative ("this could indicate a pivot to downmarket"). We landed on a prompt structure that anchors the summary in what specifically changed, states the category, and offers one sentence of context about potential implications without overreaching.
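
The prompt itself is not reproduced here, but its shape can be sketched. Everything below is a hypothetical illustration: the wording, the JSON reply format, and the function names are assumptions, and the call to the Claude API is deliberately left out. Only the category list comes from the system described above.

```python
import json

CATEGORIES = [
    "pricing update", "new feature", "feature removal", "messaging shift",
    "new integration", "new partnership", "hiring signal", "other",
]


def build_prompt(competitor: str, page_url: str, removed: list, added: list) -> str:
    """Assemble a prompt anchored in the specific text that changed."""
    removed_text = "\n".join(f"- {line}" for line in removed) or "(nothing)"
    added_text = "\n".join(f"+ {line}" for line in added) or "(nothing)"
    category_list = ", ".join(CATEGORIES)
    return (
        f"A page on {competitor}'s website changed: {page_url}\n\n"
        f"Removed text:\n{removed_text}\n\n"
        f"Added text:\n{added_text}\n\n"
        "Reply with a JSON object with two keys:\n"
        f'"category": one of {category_list}\n'
        '"summary": state what specifically changed, then give one sentence '
        "on why it might matter. Do not speculate beyond what the change shows."
    )


def parse_classification(raw: str) -> dict:
    """Validate the model's JSON reply; unknown categories fall back to 'other'."""
    data = json.loads(raw)
    category = str(data.get("category", "")).strip().lower()
    if category not in CATEGORIES:
        category = "other"
    summary = str(data.get("summary", "")).strip()
    if not summary:
        raise ValueError("model reply is missing a summary")
    return {"category": category, "summary": summary}
```

Validating the reply against a fixed category list keeps the downstream routing predictable even when the model returns a label outside the list.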

4. Slack Alerts

The final step is delivery. Every classified change fires a Slack notification to a dedicated channel. The alert includes the competitor name, the category tag, the AI-generated summary, and a link to the actual page so anyone who wants to dig deeper can verify.

We set up channel routing so pricing changes also ping the sales team directly, product changes go to the product channel, and hiring signals go to the leadership channel. Not every change is relevant to every person, and flooding a single channel with everything would just recreate the noise problem we were trying to solve.
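
The routing can be pictured as a small lookup table in front of Slack's incoming webhooks. This is a sketch under assumptions: the webhook URLs are placeholders, the channel names are invented, and the mapping of "product changes" to the new feature and feature removal categories is our guess at a reasonable split.

```python
import json
import urllib.request

# Placeholder webhook URLs; real ones come from Slack's incoming webhook setup.
WEBHOOKS = {
    "competitive-intel": "https://hooks.slack.com/services/EXAMPLE/MAIN",
    "sales": "https://hooks.slack.com/services/EXAMPLE/SALES",
    "product": "https://hooks.slack.com/services/EXAMPLE/PRODUCT",
    "leadership": "https://hooks.slack.com/services/EXAMPLE/LEADERSHIP",
}

# Extra channels per category, on top of the dedicated channel (assumed mapping).
EXTRA_ROUTES = {
    "pricing update": ["sales"],
    "new feature": ["product"],
    "feature removal": ["product"],
    "hiring signal": ["leadership"],
}


def channels_for(category: str) -> list:
    """Every change goes to the dedicated channel; some categories fan out further."""
    return ["competitive-intel"] + EXTRA_ROUTES.get(category, [])


def build_message(competitor: str, category: str, summary: str, page_url: str) -> dict:
    """Build an incoming webhook payload using Slack's mrkdwn link syntax."""
    text = f"*{competitor}* | {category}\n{summary}\n<{page_url}|View page>"
    return {"text": text}


def send_alert(competitor: str, category: str, summary: str, page_url: str) -> None:
    """POST the alert to each routed channel's webhook."""
    payload = json.dumps(build_message(competitor, category, summary, page_url)).encode("utf-8")
    for channel in channels_for(category):
        request = urllib.request.Request(
            WEBHOOKS[channel],
            data=payload,
            headers={"Content-Type": "application/json"},
        )
        with urllib.request.urlopen(request, timeout=10):
            pass  # a non-2xx response raises urllib.error.HTTPError
```

Keeping the routes in data rather than code means a new team or channel is a one-line change.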

The Stack

The technology choices were driven by simplicity and reliability, not novelty. This system needs to run quietly in the background every day without breaking. Boring, reliable infrastructure was the right call.

- Supabase for storage and scheduling logic. Snapshots, diffs, and classified results all live in Supabase tables. We also use Supabase's edge functions to orchestrate the pipeline on a schedule.

- Playwright for web scraping. Handles JavaScript-rendered pages, which is most of the modern web. More resource-intensive than basic HTTP scraping but dramatically more reliable.

- Claude API for classification and summarization. We tested several models and Claude consistently produced the most useful, well-calibrated summaries. It was especially strong at distinguishing meaningful changes from noise, which is the core challenge of the entire system.

- Slack Webhooks for delivery. Simple, reliable, and the team already lives in Slack. No need to build a custom dashboard when the information needs to arrive where people are already looking.

The Hard Part: Reducing Noise

If I had to pick the single part of this project that took the most iteration, it was noise reduction. The scraping, diffing, and classification were technically interesting but relatively straightforward to build. Getting the system to only alert on things that actually matter? That took weeks of tuning.

Nobody wants 50 alerts a day because a footer copyright year changed or a testimonial carousel rotated. The first version of the system was unusably noisy. We were getting dozens of alerts per day, and most of them were meaningless.

Here is what we did to fix it:

- Character threshold filtering. Changes below a minimum character count are ignored entirely. A single-word change in a footer is not worth alerting on.

- Dynamic element exclusion. We maintain a list of CSS selectors and content patterns to exclude from comparison. This covers timestamps, session tokens, cookie banners, live chat widgets, rotating testimonials, and similar dynamic elements that change on every page load.

- Structural diffing over text diffing. Instead of comparing raw text, we compare the semantic structure of the page. If the same content is present but the HTML wrapper changed (a common occurrence during frontend deployments), that does not count as a meaningful change.

- AI-powered relevance scoring. After the classification step, the AI also assigns a relevance score. Changes that score below a set threshold get logged but do not trigger an alert. This catches the edge cases that rule-based filtering misses, like when a competitor updates their "About Us" page with minor wording tweaks that do not signal anything strategic.

- Feedback loop. The Slack alerts include reaction buttons. If someone marks an alert as irrelevant, that feedback gets stored and used to improve the relevance scoring over time. The system gets smarter the more the team uses it.
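
As one simple reading of the structural comparison and element exclusion described above, the sketch below reduces a page's HTML to its ordered text blocks, so that a changed wrapper or an excluded widget produces no difference. It assumes the raw HTML is available alongside the text snapshot and that the markup is well formed. The class names in `EXCLUDED_CLASSES` are hypothetical.

```python
from html.parser import HTMLParser

SKIPPED_TAGS = {"script", "style", "noscript"}
# Hypothetical class names for dynamic elements to leave out of the comparison.
EXCLUDED_CLASSES = {"cookie-banner", "testimonial-carousel", "chat-widget"}
# Void elements never get a closing tag, so they must not affect depth tracking.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}


class TextBlockExtractor(HTMLParser):
    """Collect visible text blocks in order, ignoring tags and excluded subtrees."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._skip_depth = 0  # greater than zero while inside an excluded subtree

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        classes = set((dict(attrs).get("class") or "").split())
        if self._skip_depth or tag in SKIPPED_TAGS or classes & EXCLUDED_CLASSES:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in VOID_TAGS:
            return
        if self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        text = " ".join(data.split())
        if text and not self._skip_depth:
            self.blocks.append(text)


def extract_blocks(html: str) -> list:
    """Return the page's text blocks with wrappers and excluded elements removed."""
    parser = TextBlockExtractor()
    parser.feed(html)
    parser.close()
    return parser.blocks
```

Two snapshots whose `extract_blocks` output is equal are treated as unchanged here, no matter how much the surrounding markup was reshuffled by a frontend deployment.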

After about two weeks of active tuning, we got the system from 40+ alerts per day down to 3 to 8 meaningful alerts per week. That is the sweet spot. Enough to keep the team informed, few enough that every alert actually gets read.

What Changed for the Client

The system has been running for about two months. Here is what the client has reported:

- Sales calls are better prepared. The head of sales told me this was the single biggest impact. Reps now walk into calls knowing exactly what competitors announced in the last week. When a prospect says "we are also looking at Competitor X," the rep can immediately reference the competitor's latest pricing, new features, or limitations. That level of preparedness builds trust.

- Pricing responses are faster. When a competitor dropped pricing on their mid-tier plan, the sales team knew within hours instead of finding out weeks later from a prospect. They were able to prepare talking points and adjust their positioning before the next sales call.

- Product roadmap is more informed. The product team uses the feature change alerts and hiring signals to understand where competitors are investing. This does not dictate their roadmap, but it gives them context they did not have before.

- Marketing messaging stays sharp. When a competitor shifts their positioning (for example, from "all-in-one platform" to "enterprise-grade security"), the marketing team can adjust their own differentiation messaging proactively instead of discovering the shift months later.

What We Learned

Job postings are an underrated signal

When we first pitched tracking job postings, the client was skeptical. "How does knowing they are hiring help us?" But job postings are one of the most revealing public signals a company puts out. A competitor posting for five machine learning engineers tells you they are investing in AI. A competitor hiring in Germany tells you they are expanding into the EU market. A competitor posting for a "Head of Enterprise Sales" when they have historically been self-serve tells you their go-to-market is shifting. These are strategic signals that pricing pages and changelogs do not reveal.

The AI classification layer is what makes or breaks the system

Without AI classification, this would just be a web scraping tool with a Slack integration. The world does not need another one of those. What makes this system useful is that it translates raw web changes into business-relevant intelligence. The difference between "line 23 changed on competitor's pricing page" and "Competitor X reduced their mid-tier price by 20%, undercutting your starter plan" is the difference between a tool nobody uses and one that changes how a team operates.

Simple delivery beats fancy dashboards

We considered building a custom dashboard with charts, timelines, and trend analysis. We are glad we did not. The team lives in Slack. Information that arrives in Slack gets read. Information that lives in a separate dashboard gets ignored. We could always add a dashboard later if the team wants historical analysis, but for real-time competitive awareness, meeting people where they already are is the right approach.

Noise reduction is not a feature. It is the product.

Anyone can build a web scraper that detects changes. The value is entirely in what you choose not to alert on. If every change triggers a notification, people stop reading notifications. The system becomes background noise and then it becomes shelfware. The weeks we spent tuning noise reduction were not polish. They were the core engineering of the entire project.

Who This Works For

This type of system is not limited to B2B SaaS. The same approach applies to any business that operates in a competitive market where public signals matter:

- E-commerce businesses tracking competitor pricing, product launches, and promotions

- Recruitment agencies monitoring competitor job boards and service offerings

- Financial services firms tracking competitor product changes and rate adjustments

- Marketing agencies monitoring competitor campaigns, case studies, and positioning

- Any business in a market where knowing what the other side is doing is a material advantage

The total build time for this project was about three weeks, including all the noise reduction tuning. The ongoing cost is minimal: Supabase hosting, Playwright compute, and Claude API calls. For a system that fundamentally changes how a team understands their competitive landscape, the ROI is hard to argue with.

Key Takeaways

- Most B2B teams track competitors manually and inconsistently. Automation turns competitive intelligence from a monthly chore into a continuous stream.

- The four-step pipeline (scrape, diff, classify, alert) transforms raw web changes into business-relevant intelligence.

- Playwright is essential for scraping modern, JavaScript-heavy web applications. Basic HTTP requests miss too much.

- AI classification is what separates a useful intelligence tool from a noisy web scraper. Without it, you are just shipping diffs to Slack.

- Noise reduction is the hardest and most important part. The value of the system is in what it does not alert on.

- Job postings are one of the most revealing public competitive signals. Track them.

- Deliver insights where people already are. Slack beats a custom dashboard for real-time competitive awareness.

Want to stop finding out about competitor moves from your prospects?

If you are tired of being the last to know what your competitors are doing, let's talk. I will look at your competitive landscape and give you an honest assessment of whether an automated monitoring system makes sense for your situation, and what it would take to build one.

Book a Free Discovery Call

Or email us directly at info@vynapse.ai

Safi Ur Rehman

Founder of Vynapse. Building production-grade AI systems for businesses. Previously delivered AI solutions at Deloitte Digital, Checkout.com, and Careem.
